Project Overview

Type: Internal GenAI Insight tool

Team: Fay Cai, Iris Bierlein, engineering team

Role: AI Product Strategist & Architect

Tools: Google Sheets + Apps Script + OpenAI API

Timeline: 02/2025 — 04/2025

A lightweight internal GenAI insight system for the NYU Libraries UX team that transformed unstructured user feedback into semantically and behaviorally organized intelligence. It reduced manual tagging time by 90%, uncovered trends across cohorts, and enabled two major UX interventions, all without introducing a separate product UI.

Impact & Outcome

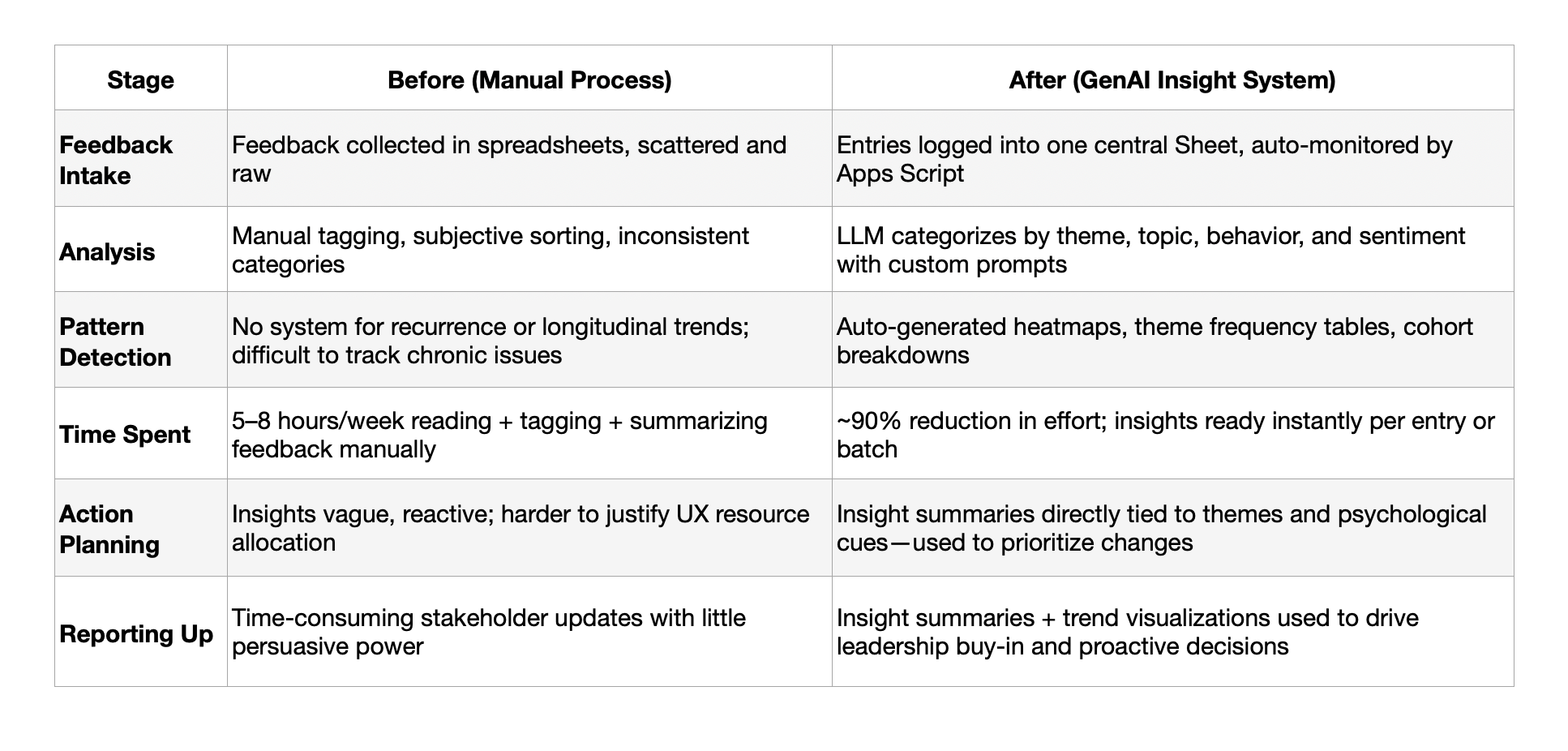

• 90%+ reduction in manual tagging

• 2 new UX interventions in progress

• Shifted UX team’s workflow from reactive to proactive

• Recurring themes surfaced across time

• Callout quote by UX Director Lisa Gayhart:

“This system made it so persuasive and powerful for us to advocate for UX changes to the leadership team.”

Key Takeaways

• AI is most powerful when it augments existing habits, not replaces them.

• Adoption thrives on zero-friction design—a well-integrated workflow beats flashy new UI.

• UX strategy is not just about screens—it’s about mental models, systems, and behavioral cues.

• Small tools can drive big change when they align with users' actual cognitive workflows and pain points.

• Insight design is not just about visualizing data—it’s about interpreting it with nuance, prioritizing it with context, and transforming feedback from noise into navigation. This empowers UX teams to act confidently—identifying patterns, justifying interventions, and advocating for system-level improvements with speed and clarity.

• Explainability is baked in: Each insight is grounded in behaviorally tagged outputs, fostering trust and transparency in how decisions are made.

What's the Problem?

The UX team collected large volumes of user feedback from different cohorts each quarter, but relied on:

• Manual categorization (inconsistent)

• Basic sentiment scoring (often inaccurate or vague)

• No system for recurrence detection (hard to spot chronic vs. emerging issues)

Design Goal

To guide a large language model (LLM) to infer intent, emotion, and behavioral patterns from unstructured user feedback using principles from cognitive and behavioral science, and to design a lightweight, maintainable internal tooling system that extracts:

• Recurring unmet needs

• Suggestions and support patterns

• Latent behavioral insights with an explainable layer

All of this is done without introducing a new product interface or dashboard. Instead, the system builds on existing workflows (Google Sheets + Apps Script) to empower the UX team with actionable, psychologically aware intelligence.

User Story

"As a UX manager, I need a simple, private, low-maintenance system that helps me understand what users are consistently struggling with, without reading every single piece of feedback, so I can prioritize fixes and advocate for changes more efficiently and consistently within the organization."

Solution: GenAI-Powered Insight System (Inside Google Sheets)

Input: A raw feedback sheet with timestamped, anonymous user entries across multiple cohorts.

Automation Pipeline:

1. Trigger & Connection: Google Apps Script monitors new entries and connects to the OpenAI API through a custom add-on menu—enabling non-technical users to initiate analysis on demand.

2. LLM-Powered Processing: Custom-engineered prompts guide the LLM (GPT-4) to return structured outputs:

• Semantic Category (e.g., Complaint, Suggestion, Positive Feedback)

• Precise Topic (e.g., “bathroom,” “wifi,” “staff,” etc.)

• Behavioral Intent & Psychological Cue (e.g., loss of control, unmet need, motivation blocker)

• Insight Summary for immediate pattern recognition

3. Data Parsing & Output:

Responses are parsed and written directly back into structured columns—transforming the raw Sheet into a live, intelligent insight dashboard.
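The parsing step of the pipeline above can be sketched roughly as follows. This is a minimal illustration, not the production script: it assumes the prompt asks the model for a single JSON object, and the key names and column order in OUTPUT_COLUMNS are hypothetical, since the actual Sheet layout is not shown here.

```javascript
// Hypothetical column order for the tagged output; the real Sheet layout may differ.
const OUTPUT_COLUMNS = [
  "Semantic_Category",
  "Topic",
  "Behavioral_Intent",
  "Psychological_Cue",
  "Insight_Summary",
];

/**
 * Convert one raw model reply into a row of cell values.
 * Returns null when no valid JSON object can be recovered,
 * so the caller can route the entry to human review.
 */
function parseInsightResponse(rawText) {
  // Models sometimes add text around the JSON; keep only the outermost braces.
  const start = rawText.indexOf("{");
  const end = rawText.lastIndexOf("}");
  if (start === -1 || end <= start) return null;

  let parsed;
  try {
    parsed = JSON.parse(rawText.slice(start, end + 1));
  } catch (err) {
    return null;
  }

  // Missing or non-string fields become empty cells rather than errors.
  return OUTPUT_COLUMNS.map((key) =>
    typeof parsed[key] === "string" ? parsed[key].trim() : ""
  );
}
```

In Apps Script, a row produced this way could be written back with sheet.getRange(rowNumber, firstOutputColumn, 1, row.length).setValues([row]), where rowNumber and firstOutputColumn are placeholders for the actual positions in the Sheet.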

Output & Interface: The Sheet becomes a lightweight yet powerful UX intelligence system with:

• Filterable, tagged feedback for fast qualitative review

• Topic-level clustering to map recurring themes

• Cohort-based analysis (e.g., quarter-over-quarter segmentation)

• Dynamic heatmaps to visualize frequency and persistence

• Trend pivots to detect chronic vs. emerging pain points
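As a rough illustration of what the trend pivots compute, the sketch below labels each topic by how many cohorts it appears in. The thresholds and the labels "chronic", "emerging", and "other" are assumptions made for this example; in the actual system the distinction is read from pivot tables and heatmaps in the Sheet.

```javascript
/**
 * entries: array of { topic, cohort } objects (one per tagged feedback row)
 * cohorts: ordered list of cohort labels, oldest first
 * minCohortsForChronic: assumed threshold, not a value from the real system
 */
function classifyTopics(entries, cohorts, minCohortsForChronic = 3) {
  // Collect the set of cohorts in which each topic was mentioned.
  const seen = new Map();
  for (const { topic, cohort } of entries) {
    if (!seen.has(topic)) seen.set(topic, new Set());
    seen.get(topic).add(cohort);
  }

  const latest = cohorts[cohorts.length - 1];
  const result = {};
  for (const [topic, cohortSet] of seen) {
    if (cohortSet.size >= minCohortsForChronic) {
      result[topic] = "chronic"; // persists across many cohorts
    } else if (cohortSet.size === 1 && cohortSet.has(latest)) {
      result[topic] = "emerging"; // only seen in the most recent cohort
    } else {
      result[topic] = "other";
    }
  }
  return result;
}
```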

Why this Approach Works:

• Zero Adoption Friction: No new platform—integrates directly into an existing toolchain (Google Sheets)

• AI-Native Intelligence: Every feedback entry is semantically and behaviorally analyzed for deeper insight

• Action-Oriented Design: Insight is immediately usable for UX prioritization and stakeholder reporting

• Cost-Conscious Optimization: Batching logic and token-aware prompt design reduce OpenAI API costs while maintaining output quality

• Feedback Loop & Prompt Adaptation: Users can flag unclear tags or summaries. These are reviewed weekly to refine prompts. A semi-automated feedback integration (via Sheets comments or “Flag for Review” columns) is on the roadmap.

• Model Reliability & Failure Modes: Prompt safeguards mitigate hallucinations, with fallback tagging for edge cases. Emotion inference is anchored in language cues only. The system flags ambiguous entries for human QA.

• Security & Data Privacy: Feedback is anonymized and securely handled within the team’s Google Workspace. No personal or sensitive information is passed to the LLM.
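The batching logic mentioned under Cost-Conscious Optimization could look something like the sketch below. It groups feedback entries so that each API call stays under a size budget. Character count is used here as a crude stand-in for token count, and the default budget of 6000 characters is an arbitrary assumption rather than a value from the real system.

```javascript
/**
 * Group feedback strings into batches whose combined length stays
 * within maxCharsPerBatch. An entry longer than the budget is placed
 * in a batch of its own instead of being dropped.
 */
function batchEntries(entries, maxCharsPerBatch = 6000) {
  const batches = [];
  let current = [];
  let currentChars = 0;

  for (const entry of entries) {
    // Start a new batch when adding this entry would exceed the budget.
    if (current.length > 0 && currentChars + entry.length > maxCharsPerBatch) {
      batches.push(current);
      current = [];
      currentChars = 0;
    }
    current.push(entry);
    currentChars += entry.length;
  }

  if (current.length > 0) batches.push(current);
  return batches;
}
```

Fewer, fuller requests mean the shared prompt instructions are sent less often, which is where most of the savings would come from.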

Key Design Principles

• Behavior-First Prompting: Aligns LLM reasoning with human affect models

• Workflow-Native Design: No new tools, just smart layers on top of existing ones

• Time-Sensitive Insight: Uses cohort mapping to distinguish chronic vs. transient issues

• Low-Friction Collaboration: Designed for non-technical UX stakeholders

Add-on menu built with Google Apps Script: lets non-technical team members operate the system easily.

Stack Overview

Tools

Google Sheets: Primary interface and data store for UX teams

Google Apps Script: Custom trigger, menu add-on, and automation logic

OpenAI API (GPT-4): LLM-powered inference engine

Prompt Engineering Framework: Token-efficient, behavior-tagged prompt templates

Visualization Layer: Dynamic heatmaps, pivot tables, and cohort-based filters

System Components

Trigger & Add-on Menu: Allows non-technical users to run on-demand analysis directly within Google Sheets.

LLM Insight Engine: Uses engineered prompts to extract semantic category, behavioral intent, and latent emotional signals.

Structured Output Writer: Parses LLM response into clean, column-based tagging for immediate use.

Insight Visualization: Auto-generated heatmaps and cohort filters surface chronic vs. emergent UX issues.

Feedback Loop: UX managers provide prompt-level feedback; outputs are reviewed and updated for alignment with evolving context.

Reliability Guardrails: Prompt templates include fallback constraints to mitigate hallucination and overgeneralization.

Security & Privacy: Anonymous, timestamped input with no PII; API access governed by internal token controls.
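A minimal sketch of the Trigger & Add-on Menu component is shown below. The menu label, the function name analyzeNewFeedback, and the column positions (feedback in column B, category in column C) are all hypothetical. The SpreadsheetApp calls are standard Apps Script, but this is an illustration of the pattern, not the deployed script.

```javascript
// Simple trigger: Apps Script runs onOpen() whenever the spreadsheet is opened.
function onOpen() {
  SpreadsheetApp.getUi()
    .createMenu("UX Insights") // hypothetical menu name
    .addItem("Analyze new feedback", "analyzeNewFeedback")
    .addToUi();
}

// Treat null/undefined cells as empty strings.
function cellText(value) {
  return value === null || value === undefined ? "" : String(value).trim();
}

/**
 * Pure helper: given the sheet's values (header in the first row), return
 * the 1-based sheet row numbers that have feedback but no category yet.
 */
function findUnprocessedRows(values, feedbackCol, categoryCol) {
  const rows = [];
  for (let i = 1; i < values.length; i++) {
    const hasFeedback = cellText(values[i][feedbackCol]) !== "";
    const hasCategory = cellText(values[i][categoryCol]) !== "";
    if (hasFeedback && !hasCategory) rows.push(i + 1);
  }
  return rows;
}

// Menu handler: only runs inside Apps Script, where SpreadsheetApp exists.
function analyzeNewFeedback() {
  const sheet = SpreadsheetApp.getActiveSheet();
  const values = sheet.getDataRange().getValues();
  const pending = findUnprocessedRows(values, 1, 2); // assumed: B = feedback, C = category
  SpreadsheetApp.getUi().alert(pending.length + " entries queued for analysis.");
}
```

Keeping the row-selection logic in a pure function makes it easy to test outside the Sheet and means re-running the menu item only touches entries that have not been tagged yet.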

Advanced Prompt Engineering:

Cognitive Design Layer— Prompt as Behavioral Scaffold

To move beyond sentiment and extract actionable insight, I embedded behavioral psychology principles directly into the prompt. This allowed the LLM to:

• Detect user frustration signals

• Identify latent needs (not just surface complaints)

• Categorize intent through a behavioral lens

Behavioral Dimensions Used in the Prompt:

Behavioral_Intent: Based on intent inference from behavioral economics and affective computing.

Psychological_Cue: Inspired by self-determination theory, BJ Fogg’s behavior model, and affective UX research.

Insight_Summary: Designed to reflect latent needs, not just surface issues (e.g., “seeking control over environment”).
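To make the idea of a behavioral scaffold concrete, here is a simplified sketch of how such a prompt might be assembled. The wording of the instructions and the field hints are illustrative assumptions, not the production prompt. Only the field names Behavioral_Intent, Psychological_Cue, and Insight_Summary and the emotion-anchoring rule come from the system described here. Semantic_Category and Topic are assumed key names for the first two outputs.

```javascript
// Field hints are illustrative; the real prompt templates are more detailed.
const BEHAVIORAL_FIELDS = {
  Semantic_Category: "One of: Complaint, Suggestion, Positive Feedback.",
  Topic: "A short topic label, for example wifi, bathroom, or staff.",
  Behavioral_Intent: "What the user is trying to do or change.",
  Psychological_Cue: "For example: loss of control, unmet need, motivation blocker.",
  Insight_Summary: "One sentence on the latent need, not just the surface issue.",
};

/**
 * Build the prompt text for a single feedback entry.
 */
function buildPrompt(feedbackText) {
  const fieldLines = Object.entries(BEHAVIORAL_FIELDS)
    .map(([name, hint]) => "- " + name + ": " + hint)
    .join("\n");

  return [
    "You analyze anonymous library user feedback.",
    "Return a single JSON object with exactly these keys:",
    fieldLines,
    "Only infer emotion if the text includes subjective language or self-reflection.",
    "Feedback:",
    feedbackText.trim(),
  ].join("\n");
}
```

Defining the fields in one object keeps the prompt and the output columns in sync: adding a dimension means adding one entry rather than editing free-form prompt text.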

Feedback Loop & Prompt Adaptation

Manual-to-Semi-Automated Feedback Cycle

• After initial deployment, UX managers were invited to flag incorrect or ambiguous insight tags directly in the Google Sheet output.

• I implemented a lightweight column-based feedback mechanism (e.g., “✓ accurate” / “flag for revision”), which was reviewed weekly to refine prompt structure and categorization logic.

• Patterns in flagged outputs were used to manually adjust prompt templates and clarify instructions for the LLM—essentially forming a closed-loop refinement cycle.

• The roadmap includes a semi-automated version of this: flagged samples will feed into a curated fine-tuning dataset or be used to test prompt variants using GPT's function-calling and evaluation tools.
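The weekly review of flagged outputs can be supported by a small summary like the one sketched below. It counts how often reviewers marked a row "flag for revision" and which topics attract the most flags. The row shape ({ topic, review }) is a hypothetical simplification of the feedback column described above.

```javascript
/**
 * rows: array of { topic, review } objects, where review is the value of
 * the feedback column ("✓ accurate", "flag for revision", or empty).
 * Returns overall flag rate plus flagged counts per topic, most flagged first.
 */
function summarizeFlags(rows) {
  const counts = {};
  let flagged = 0;

  for (const row of rows) {
    if (row.review !== "flag for revision") continue;
    flagged += 1;
    counts[row.topic] = (counts[row.topic] || 0) + 1;
  }

  // Sort by count descending, then alphabetically for a stable order.
  const byTopic = Object.entries(counts).sort(
    (a, b) => b[1] - a[1] || a[0].localeCompare(b[0])
  );

  return {
    flagged,
    total: rows.length,
    flagRate: rows.length ? flagged / rows.length : 0,
    byTopic,
  };
}
```

A topic that dominates the flagged list is a hint that the prompt's instructions for that area need clarification.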

Security & Data Privacy

Privacy-Safe by Design

• The tool was deployed within the team's Google Workspace with secure access control (limited to the NYU Libraries UX team and myself).

• No personal identifiers or private data were used; feedback was anonymized at the collection point (user surveys & transcripts).

• Prompt content never included names, IDs, or institutional details, and only summary-level behavioral descriptions were processed by the LLM.

Model Reliability & Failure Modes

Observed Challenges and Mitigations

• Hallucination Risk: Early testing showed the LLM occasionally inferred emotion from tone rather than content. I mitigated this by explicitly defining emotion inference anchors in the prompt (e.g., “only infer emotion if text includes subjective language or self-reflection”).

• Bias in Attribution: The model sometimes over-indexed on negative sentiments. I added behavioral counter-balancers to encourage neutral-to-positive reframing when applicable.

• Token Efficiency Tradeoff: To manage cost and ensure consistent output, I chunked longer transcripts and batched queries using a summary+follow-up pattern rather than full-length feeds.

• Fallback Strategy: For ambiguous or edge-case queries, the system generated an “⚠️ Flagged for Human Review” tag—making human oversight part of the loop.
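The fallback strategy can be sketched as a validation pass over each parsed result. The specific checks below (a fixed list of allowed categories, required topic and summary) and the Review_Status field are assumptions for illustration. The actual system may use different criteria to decide what counts as an edge case.

```javascript
const REVIEW_TAG = "⚠️ Flagged for Human Review";

// Assumed closed set of categories; the real list may be longer.
const ALLOWED_CATEGORIES = ["Complaint", "Suggestion", "Positive Feedback"];

/**
 * Validate one parsed insight object. Valid results pass through unchanged
 * (with an empty Review_Status); anything else is tagged for human review,
 * with the reasons recorded in Review_Status.
 */
function applyFallback(insight) {
  const isObject = insight !== null && typeof insight === "object";
  const problems = [];

  if (!isObject) {
    problems.push("no parsable output");
  } else {
    if (!ALLOWED_CATEGORIES.includes(insight.Semantic_Category)) {
      problems.push("unknown category");
    }
    if (!insight.Topic) problems.push("missing topic");
    if (!insight.Insight_Summary) problems.push("missing summary");
  }

  const base = isObject ? insight : {};
  if (problems.length === 0) return { ...base, Review_Status: "" };
  return {
    ...base,
    Semantic_Category: REVIEW_TAG,
    Review_Status: problems.join("; "),
  };
}
```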

Example Insights Generated by the AI-powered System

Information Architecture & System Flow (Automated)

User Journey: Before vs. After

Future Vision for the System

While the current system is built inside Google Sheets for speed and adoption, its architecture and insight model can be extended to other platforms and scaled as a more robust internal AI product.

Expansion Opportunities:

• Jira/Monday Integration

Auto-create UX tickets from GenAI-tagged insights, assign priority based on frequency or severity, and track resolution loops.

• Notion or Confluence Integration

Push insight summaries into team knowledge bases for context-aware documentation and product rituals.

• Multi-model LLM Layer

Use additional models (Claude, Mistral, etc.) for cross-validation, bias detection, or multilingual feedback support.

• Insight Feedback Loop

Allow UX managers to give feedback on insight quality, retrain prompt design dynamically, and improve accuracy over time.